Anthropic researchers successfully identified millions of concepts inside Claude Sonnet, one of their advanced LLMs.

AI models are often considered black boxes, meaning you can’t ‘see’ inside them to understand exactly how they work.

When you provide an LLM with an input, it generates a response, but the reasoning behind its choices isn’t clear.

Your input goes in and the output comes out, and even the AI developers themselves don’t truly understand what happens inside that ‘box.’

Neural networks create their own internal representations of information when they map inputs to outputs during training. The building blocks of this process, called “neuron activations,” are represented by numerical values.

Each concept is distributed across multiple neurons, and each neuron contributes to representing multiple concepts, making it difficult to map concepts directly to individual neurons.

This is broadly analogous to our human brains. Just as our brains process sensory inputs and generate thoughts, behaviors, and memories, the billions, even trillions, of processes behind those functions remain largely unknown to science.

Anthropic’s study attempts to see inside AI’s black box with a technique called “dictionary learning.”

This involves decomposing complex patterns in an AI model into linear building blocks or “atoms” that make intuitive sense to humans.
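
As a rough illustration of that decomposition (not Anthropic’s code), the sketch below rebuilds a made-up four-neuron activation as a weighted sum of a few named atoms. The atom names, their vectors, and the `reconstruct` helper are all hypothetical and chosen only to show the linear structure.

```python
# Hypothetical toy dictionary: each "atom" is a direction in a
# 4-neuron activation space. Names and numbers are invented.
atoms = {
    "uppercase_text": [1.0, 0.0, 1.0, 0.0],
    "dna_sequence": [0.0, 1.0, 0.0, 1.0],
    "python_argument": [1.0, 1.0, 0.0, 0.0],
}


def reconstruct(coefficients, atoms):
    """Return the weighted sum of the atoms named in `coefficients`."""
    size = len(next(iter(atoms.values())))
    activation = [0.0] * size
    for name, weight in coefficients.items():
        for i, value in enumerate(atoms[name]):
            activation[i] += weight * value
    return activation


# Only two of the three atoms are active, so the description is sparse.
activation = reconstruct({"uppercase_text": 2.0, "python_argument": 0.5}, atoms)
print(activation)  # [2.5, 0.5, 2.0, 0.0]
```

Reading the activation as “2.0 of uppercase text plus 0.5 of Python argument” is far easier than reading four raw neuron values, which is the appeal of the approach.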

Mapping LLMs with Dictionary Learning

In October 2023, Anthropic applied this method to a tiny “toy” language model and found coherent features corresponding to concepts like uppercase text, DNA sequences, surnames in citations, mathematical nouns, or function arguments in Python code.

This latest study scales up the technique to work for today’s larger AI language models, in this case Anthropic’s Claude 3 Sonnet.

Here’s a step-by-step look at how the study worked:

Identifying patterns with dictionary learning

Anthropic used dictionary learning to analyze neuron activations across various contexts and identify common patterns.

Dictionary learning groups these activations into a smaller set of meaningful “features,” representing higher-level concepts learned by the model.

By identifying these features, researchers can better understand how the model processes and represents information.
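
The article does not say which algorithm Anthropic used, so the following is only a toy sketch of the sparse-coding half of dictionary learning: given a fixed dictionary, find the few features that explain an activation. It uses greedy matching pursuit, a classic technique swapped in here for illustration. The dictionary, the feature names, and the two-neuron activation are invented, and learning the dictionary itself is not shown.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def normalize(v):
    norm = math.sqrt(dot(v, v))
    return [x / norm for x in v]


def matching_pursuit(activation, dictionary, max_features=2, tolerance=1e-9):
    """Greedily explain `activation` using a few unit-norm dictionary atoms."""
    residual = list(activation)
    codes = {}
    for _ in range(max_features):
        # Pick the atom most correlated with what is still unexplained.
        name, atom = max(dictionary.items(), key=lambda kv: abs(dot(residual, kv[1])))
        weight = dot(residual, atom)
        if abs(weight) < tolerance:
            break  # nothing meaningful left to explain
        codes[name] = codes.get(name, 0.0) + weight
        residual = [r - weight * a for r, a in zip(residual, atom)]
    return codes, residual


# Hypothetical dictionary: three features sharing only two neurons.
dictionary = {
    "feature_a": [1.0, 0.0],
    "feature_b": [0.0, 1.0],
    "feature_c": normalize([1.0, 1.0]),
}

# An activation built from feature_c alone, spread across both neurons.
activation = [2.0 * x for x in dictionary["feature_c"]]
codes, residual = matching_pursuit(activation, dictionary)
print({name: round(weight, 6) for name, weight in codes.items()})  # {'feature_c': 2.0}
```

Both neurons fire equally in this example, yet the sparse code attributes the whole activation to a single feature, which is the kind of simplification the technique aims for.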

Extracting features from the middle layer

The researchers focused on the middle layer of Claude 3 Sonnet, which serves as a critical point in the model’s processing pipeline.

Applying dictionary learning to this layer extracts millions of features that capture the model’s internal representations and learned concepts at this stage.

Extracting features from the middle layer allows researchers to examine the model’s understanding of information after it has processed the input but before it generates the final output.

Discovering diverse and abstract concepts

The extracted features revealed an expansive range of concepts learned by Claude, from concrete entities like cities and people to abstract notions related to scientific fields and programming syntax.

Interestingly, the features were found to be multimodal, responding to both textual and visual inputs, indicating that the model can learn and represent concepts across different modalities.

Additionally, the multilingual features suggest that the model can grasp concepts expressed in various languages.

Analyzing the organization of concepts

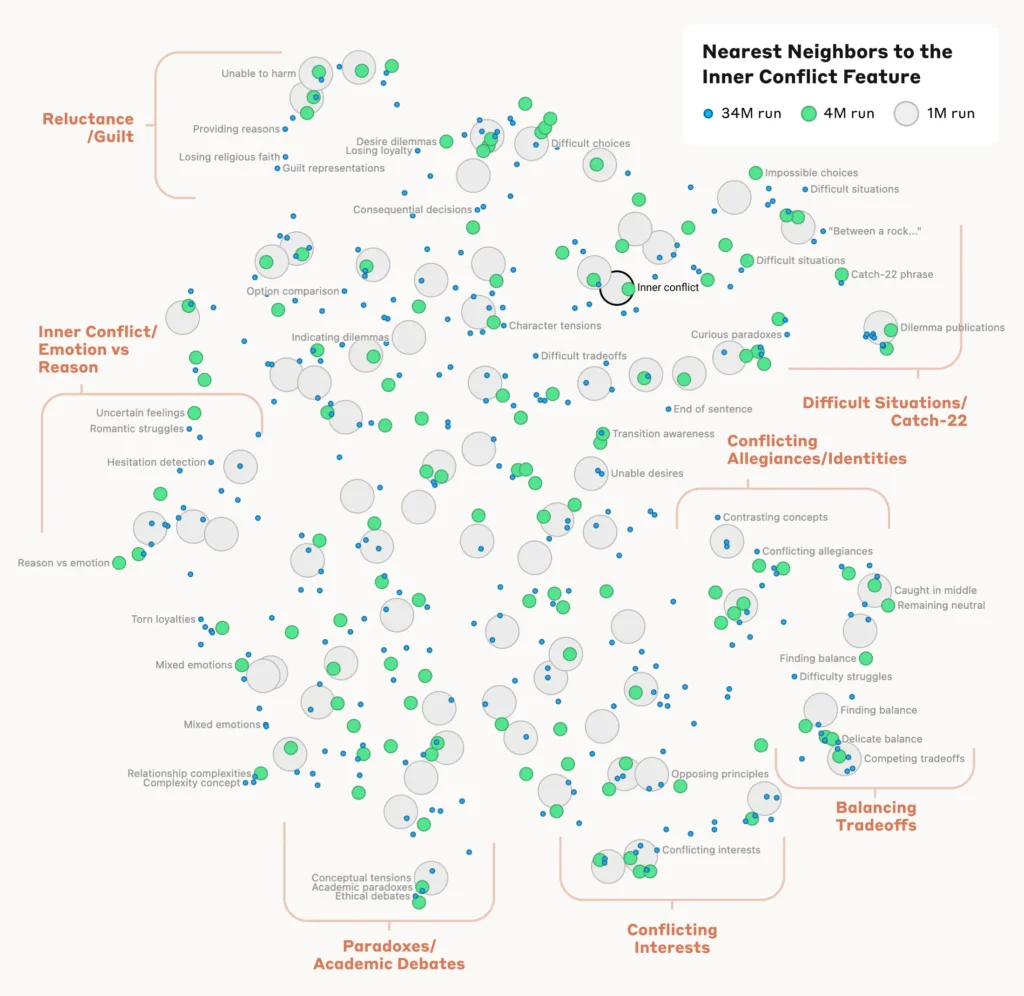

To understand how the model organizes and relates different concepts, the researchers analyzed the similarity between features based on their activation patterns.

They discovered that features representing related concepts tended to cluster together. For example, features associated with cities or scientific disciplines exhibited higher similarity to each other than to features representing unrelated concepts.

This suggests that the model’s internal organization of concepts aligns, to some extent, with human intuitions about conceptual relationships.
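
One simple way to picture this kind of comparison is cosine similarity between feature vectors. The sketch below assumes cosine similarity as the metric, which the article does not specify, and uses invented three-number activation profiles for three hypothetical features.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical activation profiles: how strongly each feature fires
# in three made-up contexts. The numbers are illustrative only.
features = {
    "Paris": [0.9, 0.1, 0.0],
    "Tokyo": [0.8, 0.2, 0.1],
    "immunology": [0.1, 0.9, 0.3],
}


def nearest(name):
    """Return the other feature most similar to `name`."""
    others = (other for other in features if other != name)
    return max(others, key=lambda other: cosine_similarity(features[name], features[other]))


print(nearest("Paris"))  # Tokyo
```

With these made-up numbers, the two city features sit much closer to each other than either does to the science feature, mirroring the clustering described above.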

Verifying the features

To confirm that the identified features directly influence the model’s behavior and outputs, the researchers conducted “feature steering” experiments.

This involved selectively amplifying or suppressing the activation of specific features during the model’s processing and observing the impact on its responses.

By manipulating individual features, researchers could establish a direct link between those features and the model’s behavior. For instance, amplifying a feature related to a specific city caused the model to generate city-biased outputs, even in irrelevant contexts.
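
A minimal sketch of the steering idea, under the assumption that a feature corresponds to a unit-length direction in activation space and that steering means setting the activation’s strength along that direction to a chosen value. The `steer` helper, the direction, and the numbers are hypothetical; the article does not describe the exact mechanism the researchers used.

```python
def steer(activation, direction, target_strength):
    """Set the activation's strength along a unit-norm `direction`.

    A large target amplifies the feature; a target of 0.0 suppresses it.
    Components orthogonal to `direction` are left unchanged.
    """
    current = sum(a * d for a, d in zip(activation, direction))
    shift = target_strength - current
    return [a + shift * d for a, d in zip(activation, direction)]


# Hypothetical "city" feature pointing along the second neuron.
city_direction = [0.0, 1.0, 0.0]
activation = [0.5, 0.25, 0.75]

amplified = steer(activation, city_direction, target_strength=4.0)
suppressed = steer(activation, city_direction, target_strength=0.0)
print(amplified)   # [0.5, 4.0, 0.75]
print(suppressed)  # [0.5, 0.0, 0.75]
```

In a real model the modified activation would be passed on to the later layers, so an amplified feature can color every subsequent output, which matches the city-biased responses described above.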

Why interpretability is critical for AI safety

Anthropic’s research is fundamentally relevant to AI interpretability and, by extension, safety.

Understanding how LLMs process and represent information helps researchers understand and mitigate risks. It lays the foundation for developing more transparent and explainable AI systems.

As Anthropic explains, “We hope that we and others can use these discoveries to make models safer. For example, it might be possible to use the techniques described here to monitor AI systems for certain dangerous behaviors (such as deceiving the user), to steer them towards desirable outcomes (debiasing), or to remove certain dangerous subject matter entirely.”

Unlocking a greater understanding of AI behavior becomes paramount as AI systems become ubiquitous in critical decision-making processes in fields such as healthcare, finance, and criminal justice. It also helps uncover the root cause of bias, hallucinations, and other unwanted or unpredictable behaviors.

For example, a recent study from the University of Bonn uncovered how graph neural networks (GNNs) used for drug discovery rely heavily on recalling similarities from training data rather than truly learning complex new chemical interactions. This makes it tough to understand exactly how these models identify new compounds of interest.

Last year, the UK government negotiated with major tech giants like OpenAI and DeepMind, seeking access to their AI systems’ internal decision-making processes.

Regulation like the EU’s AI Act will pressure AI companies to be more transparent, though commercial secrets seem sure to remain under lock and key.

Anthropic’s research offers a glimpse of what’s inside the box by ‘mapping’ information across the model.

However, the truth is that these models are so vast that, by Anthropic’s own admission, “We think it’s quite likely that we’re orders of magnitude short, and that if we wanted to get all the features – in all layers! – we would need to use much more compute than the total compute needed to train the underlying models.”

That’s an interesting point: reverse engineering a model is more computationally demanding than engineering the model in the first place.

It’s reminiscent of vastly expensive neuroscience projects like the Human Brain Project (HBP), which poured billions into mapping our own human brains only to ultimately fail.

Never underestimate how much lies inside the black box.